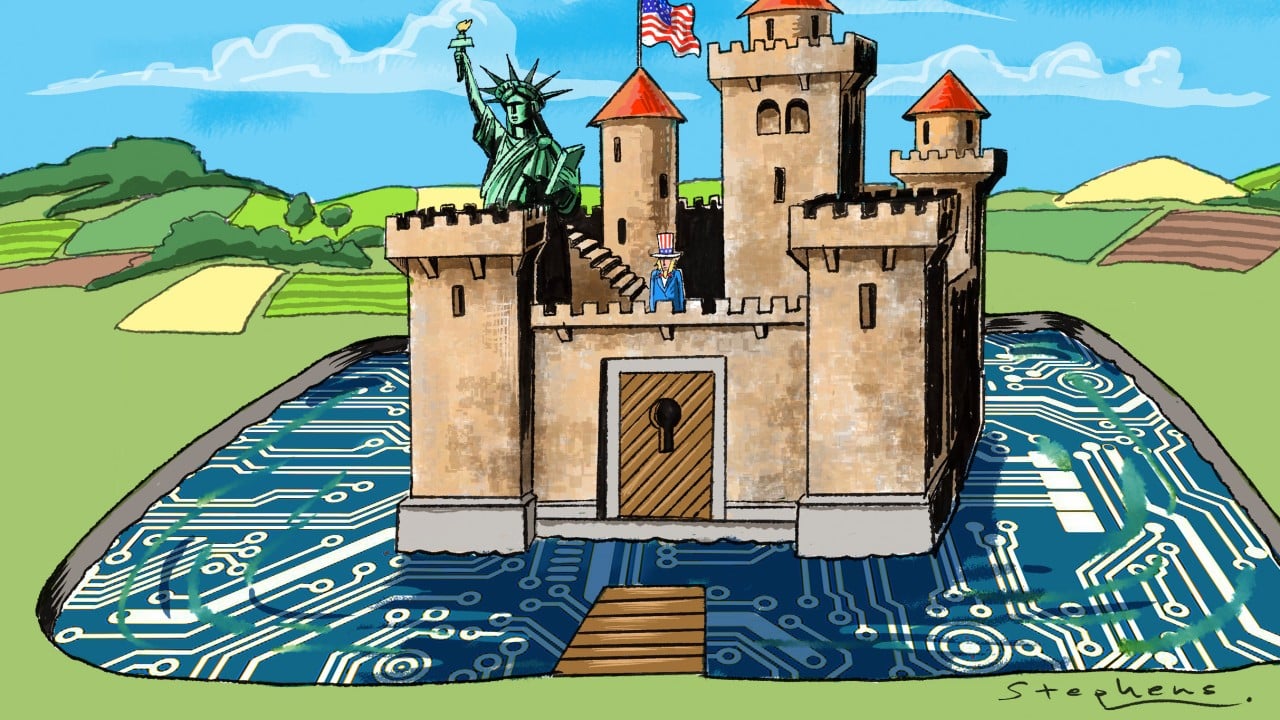

The prevailing wisdom regarding the US-China AI race is a terminal case of "both-sidesism." Policy wonks and corporate lobbyists love to drone on about the "delicate balance" between national security and open-source collaboration. They suggest we can protect the crown jewels of Silicon Valley while simultaneously reaping the benefits of global academic exchange.

This is a fantasy. It is the intellectual equivalent of trying to stay dry while scuba diving.

If you are talking about "striking a balance," you have already lost. The "lazy consensus" holds that we can put high fences around "frontier models" while keeping the gate open for "general research." It can't be done. The friction between hoarding intellectual property and participating in the global AI ecosystem is not a problem to be solved; it is an irreconcilable conflict in which one side must eventually cannibalize the other.

I have watched dozens of firms burn through eight-figure venture rounds trying to navigate these export controls. The result? They don't get security, and they certainly don't get openness. They get a bloated compliance department and a product that is obsolete before it clears legal.

The Open Source Trojan Horse

The argument for openness usually centers on the idea that "democratizing" AI prevents any one nation—or company—from becoming a digital hegemon. It sounds noble. It’s actually a strategic blunder.

When Meta releases Llama, or when researchers publish the architecture for a new transformer variant, they aren't just "fostering" (to use a word I despise) innovation. They are providing the blueprint for their own disruption. In a geopolitical context, "open" is just another word for "stolen."

The CCP does not operate on the same timeline as a quarterly earnings report from Menlo Park. They view open-source contributions from Western labs as a free R&D department. Why spend $10 billion on base model training when you can wait six months and fine-tune a leaked or open-weights model for a fraction of the cost?

The lag is shrinking. In 2022, the gap between state-of-the-art US models and their Chinese equivalents was roughly 18 to 24 months. By mid-2024, it had narrowed to under six months on specific benchmarks such as GSM8K and MMLU. Openness didn't create a "tide that lifts all boats." It created a slipstream for a competitor that doesn't have to worry about copyright law, safety alignment, or pesky ethical boards.

The Compute Fallacy

We are told that controlling the hardware—the H100s and the upcoming Blackwell chips—is our "strategic moat." This is the second great misconception.

Export controls on high-end silicon are a sieve. Smuggling rings in Shenzhen are moving thousands of units through shell companies in Dubai and Singapore. Even if you could perfectly wall off the hardware, software efficiency is improving faster than hardware restrictions can keep up.

Consider the "Chinchilla scaling laws." Research from DeepMind showed that, for a fixed compute budget, many large models were oversized and undertrained: they needed more training tokens, not more parameters. The bottleneck is data, not just raw compute. As we move toward "small language models" (SLMs) that punch way above their weight class, the $40,000 GPU becomes less of a gatekeeper. If a state actor can achieve GPT-4 level performance on a cluster of consumer-grade gaming cards through clever quantization and distillation, your hardware blockade is a historical footnote.
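To put rough numbers on that, here is a minimal sketch in Python. It assumes two widely quoted approximations associated with the Chinchilla work: training compute of about 6 FLOPs per parameter per token, and a compute-optimal ratio of roughly 20 training tokens per parameter. Both are rules of thumb, not exact constants, and the function names are illustrative. The second function counts memory for the weights only, which is the part quantization shrinks; activations and caches are ignored.

```python
import math

# Rule-of-thumb constants (assumptions, not exact values).
FLOPS_PER_PARAM_TOKEN = 6.0   # training compute C is roughly 6 * N * D
TOKENS_PER_PARAM = 20.0       # compute-optimal D is roughly 20 * N


def compute_optimal(flops_budget):
    """Return (parameters, tokens) that spend a FLOP budget compute-optimally.

    Substituting D = 20 * N into C = 6 * N * D gives C = 120 * N**2,
    so N = sqrt(C / 120).
    """
    n_params = math.sqrt(flops_budget / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    n_tokens = TOKENS_PER_PARAM * n_params
    return n_params, n_tokens


def weight_memory_gb(n_params, bits_per_weight):
    """Memory needed to hold the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    n, d = compute_optimal(5.88e23)
    print(f"params: {n:.3g}, tokens: {d:.3g}")
    for bits in (16, 8, 4):
        print(f"{bits}-bit weights: {weight_memory_gb(n, bits):.0f} GB")
```

Under these assumptions, a budget of about 5.9e23 FLOPs points at a model of roughly 70 billion parameters trained on about 1.4 trillion tokens. Quantizing such a model from 16-bit to 4-bit weights cuts its weight footprint from about 140 GB to about 35 GB, which is the arithmetic behind the claim that the flagship GPU matters less with every efficiency gain.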

Security Is a Process, Not a Product

The "Security" crowd is just as delusional as the "Openness" crowd. They think they can "air-gap" intelligence.

I’ve seen how this plays out in defense tech. You build a secure facility, you revoke the GitHub access of anyone with a foreign passport, and you sit back thinking you’re safe. Meanwhile, your top-tier researchers, the ones who actually know how the models are trained, are being headhunted with seven-figure salaries or targeted by sophisticated social engineering.

The "secret" of AI isn't a line of code. It’s the "recipe" for the training run. It’s the specific way you cleaned the data, the exact learning rate schedule, and the proprietary reinforcement learning from human feedback (RLHF) techniques. You cannot secure a recipe when the chefs are constantly moving between kitchens.

- Fact: Over 60% of top-tier AI researchers working in the US were born outside the country.

- Fact: The majority of these researchers receive their foundational training in institutions that the US government considers "adversarial."

To "secure" AI in the way the hawks want, you would have to dismantle the very pipeline of talent that made the US the leader in the first place. You’d have to turn Silicon Valley into a classified military outpost. The moment you do that, the talent leaves. They go to London, Paris, or Dubai. You end up with a very secure, very lonely, and very mediocre AI industry.

The Myth of the Neutral Platform

People often ask: "Can't we just build a neutral international body to oversee AI development?"

This is the most dangerous "People Also Ask" trope in existence. It assumes that AI is like nuclear energy. It isn't. Nuclear material is hard to refine, hard to hide, and has a very specific signature. AI is math. You cannot regulate math without regulating thought.

Any international body would be paralyzed by the same gridlock that renders the UN ineffective in modern kinetic conflicts. China will never agree to Western "safety" standards that prioritize liberal democratic values. The US will never agree to "transparency" standards that require revealing the inner workings of proprietary models.

The "balance" is a stall tactic used by companies that are too big to innovate and too scared to compete. They want regulation because regulation is a barrier to entry for the 19-year-old in a garage who might actually build something better than a 175-billion-parameter parrot.

Stop Asking if We Should Collaborate

The question isn't whether we should collaborate with "adversaries." We are already collaborating every time a paper is uploaded to arXiv.

The real question is: Why are we so bad at winning the race we started?

We are hamstrung by a weird mix of corporate greed and performative ethics. While US companies spend months "red-teaming" a chatbot to make sure it doesn't say anything offensive, Chinese firms are integrating AI into their industrial supply chains, their surveillance grids, and their electronic warfare suites.

We are worried about a chatbot's feelings; they are worried about the chatbot's throughput.

The Strategy of Extreme Asymmetry

Instead of seeking "balance," we need to embrace asymmetry.

- Abandon the "Frontier" obsession: Stop trying to build one giant model to rule them all. That’s a single point of failure. The future is a decentralized swarm of specialized agents. Harder to steal, harder to kill.

- Weaponize Open Source: If we are going to have open source, use it as a tool of cultural and technological projection. Flood the zone with models that are inherently biased toward Western computational structures. Make the "default" state of AI something that an autocratic regime finds impossible to contain.

- Intellectual Property is a Liability: In a world where AI can reverse-engineer code in seconds, your patents are worthless. The only defense is the speed of iteration. If your "security" slows your iteration cycle by 20%, you are handing the keys to the kingdom to anyone who moves at full speed.
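The 20% figure compounds. As a toy illustration only, assume every completed iteration cycle yields the same fractional improvement (the 5% used below is an arbitrary assumption, not a measured number) and that a security process stretches a 30-day cycle to 36 days. The function names and the model itself are hypothetical.

```python
def cycles_completed(horizon_days, cycle_days):
    """Number of full iteration cycles that fit in the time horizon."""
    return horizon_days // cycle_days


def compounded_gain(cycles, gain_per_cycle):
    """Cumulative improvement factor after a number of cycles."""
    return (1 + gain_per_cycle) ** cycles


def relative_lead(horizon_days, fast_cycle_days, slow_cycle_days, gain_per_cycle):
    """How far ahead the fast iterator ends up, as a ratio of improvement factors."""
    fast = compounded_gain(cycles_completed(horizon_days, fast_cycle_days), gain_per_cycle)
    slow = compounded_gain(cycles_completed(horizon_days, slow_cycle_days), gain_per_cycle)
    return fast / slow


if __name__ == "__main__":
    # 30-day cycle versus the same cycle slowed by 20% (36 days), over 360 days.
    print(cycles_completed(360, 30), cycles_completed(360, 36))
    print(round(relative_lead(360, 30, 36, 0.05), 4))
```

In this toy model the unencumbered team completes 12 cycles in 360 days against 10 for the slowed team, and finishes about 10% ahead. The gap widens every additional year the drag stays in place.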

The Cost of the Middle Ground

There is a massive downside to this contrarian approach: it’s chaotic. It’s messy. It involves admitting that the era of "American Exceptionalism" in tech is over and the era of "Global Darwinism" has begun.

By trying to "strike a balance," we are choosing a slow death by a thousand compliance checks. We are making our tech more expensive, less accessible, and more prone to being leapfrogged.

Imagine a scenario where the US implements strict licensing for any model over a certain compute threshold. The big players (Google, OpenAI, Anthropic) love this. It kills their competition. But it doesn't stop China. It just ensures that the only people building high-end AI in the West are three or four massive, slow-moving corporations. Meanwhile, the rest of the world—including our adversaries—is iterating in the wild.

In five years, we will look back at these "balance" articles as the suicide notes of a dominant tech power. You don't balance a race. You run it.

Stop looking for the middle ground. There is no middle ground in an exponential growth curve. You are either at the front, or you are the pavement.

The "balance" isn't a strategy; it’s an admission of defeat wrapped in the language of diplomacy. Pick a side: either lock it all down and watch the talent flee, or open it all up and out-innovate the thieves. Doing both is just making it easy for the people who want to replace you.

Build faster. Everything else is just noise.